Most security teams still shouldn't be building their own tools

There’s a version of the AI moment in security that looks like this: your team spins up Claude, builds a custom threat detection pipeline, ships a SOAR integration, and suddenly you have capabilities that would have taken a year and three engineers to build before.

That version exists. But it applies to maybe 5% of security teams. For everyone else, AI is a force multiplier. But only if you had force to multiply in the first place.

The companies that can build

If you work at a company where engineering is the DNA (product-led, high-growth, with a team that was already building internal security tooling before AI arrived), then yes, things change significantly.

Prototyping is faster. Internal tools ship in days, not weeks. Workflows that previously required a dedicated engineer can now be handled by a security analyst who knows how to prompt well and validate the output. If that’s your team, you should be building far more now than you ever were before.

Think of companies like OpenAI, Anthropic, Figma, Notion, and Discord: places where engineering isn’t a department, it’s the product. AI amplifies what they already had.

The companies that can’t

If building security products in-house wasn’t realistic for your team yesterday, AI won’t make it realistic tomorrow.

The constraint was never “we don’t have the tools to write code.” It was talent, time, infrastructure, and the organizational appetite for maintaining what gets built. Those constraints haven’t gone away.

A team of three SOC analysts with access to Claude is still a team of three SOC analysts. They can do more. But they’re not suddenly a product engineering team. Companies that were under regulatory pressure yesterday are still under that pressure today. Companies that couldn’t afford to hire senior security engineers still can’t.

Where the line actually sits

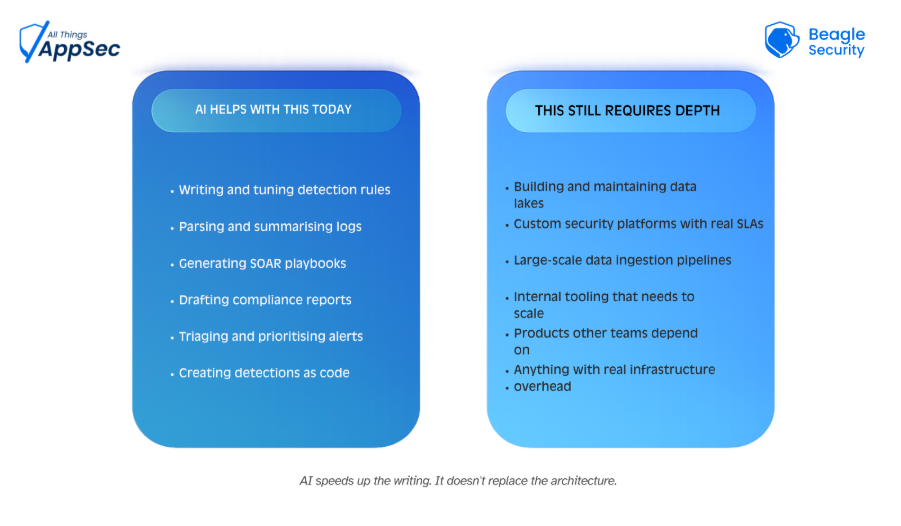

The distinction isn’t about willingness; it’s about what you’re building. There’s a clear line between AI-assisted task automation and building actual security infrastructure.

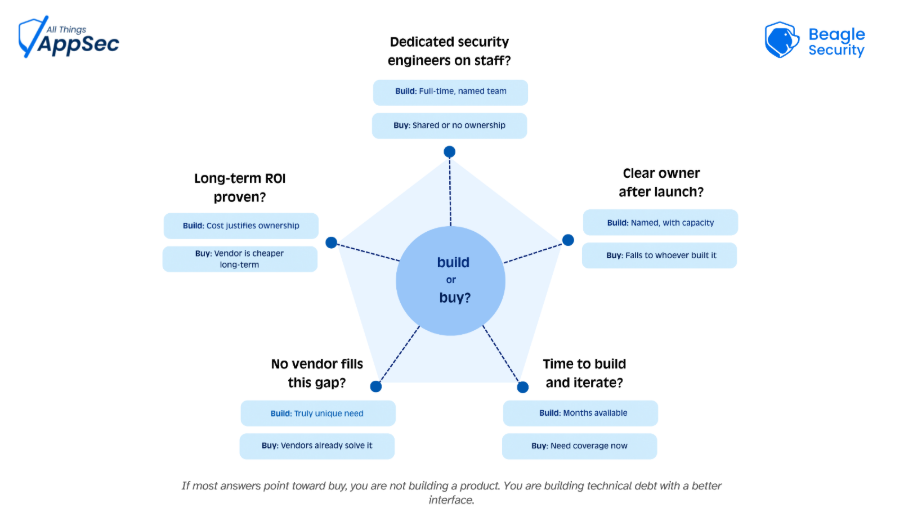

Before you decide to build, ask these five questions

Most teams skip this step. They see what AI can generate and assume that capability equals capacity. It doesn’t. Run through these before committing to building anything serious.

What this means in practice

If you’re evaluating whether to build or buy a security capability, the question isn’t “can AI help us build this?” It almost certainly can. The question is: do you have the team to maintain, iterate, and improve what you build over time?

Use AI for what it’s actually good at in your context. Automate the repeatable work. Generate the boilerplate. Speed up the analysis. But stay honest about where your team’s depth ends, and choose vendors who fill that gap well over tools that tempt you into filling it yourself.